Cybersecurity

Cybersecurity can broadly be considered as the set of techniques that protect the confidentiality, integrity, and availability (commonly referred to as the CIA model) of computer systems and the information they contain. In an environment where networked devices are ubiquitous to businesses, organisations, and individuals, cybersecurity technologies play a role across every spectrum of computing.

Cybersecurity needs to constantly evolve in line with developments in computing capabilities and the threats that seek to take advantage of them. Where traditional approaches focused on protecting a network perimeter from known threats, the speed at which threats evolve today requires more proactive cybersecurity strategies (De Groot 2020). Those traditional network perimeters are disappearing with the adoption of cloud-based services and remote work arrangements, which have removed much of the separation between personal and organisational networks, and there has been a commensurate increase in network activity as a result.

The COVID-19 pandemic has accelerated this trend, with implications for cybersecurity; Panetta (2021) reports that remote working is now available to 64% of workers, with 40% employing it. Another recent issue is the growth of the Internet of Things (IoT), which has seen the creation of millions of connected devices, each with its own security implications and cybersecurity challenges.

Considering the contemporary environment, cybersecurity practices acknowledge that not all threats can feasibly be stopped outside the network. Behaviour analytics is a technology that cybersecurity professionals use to proactively protect against unknown threats, such as zero-day attacks, unauthorised access, or insider attacks. It examines the trends, patterns and activities of users, applications, and networks to detect threats in real time (Incognito Forensic Foundation 2019).

Using specialised algorithms and machine-learning technology, behavioural analysis looks for anomalies across network activity or in users’ behaviour (Greengard n.d.). It analyses how applications interact with systems to predict malicious attacks based on that activity, rather than needing to match known historic malware code, the method on which traditional systems rely (Forcepoint n.d.).
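
To make the idea of anomaly detection more concrete, the following is a minimal sketch only, not how any particular product works. It assumes a simple statistical baseline (a z-score) in place of the machine-learning models described above, and the function name, threshold and login counts are all hypothetical.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observed, threshold=3.0):
    """Return (index, value) pairs in `observed` that deviate from the baseline.

    baseline:  historical counts treated as normal (e.g. daily logins for a user)
    observed:  new counts to check against that baseline
    threshold: number of standard deviations treated as anomalous (assumed value)
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: anything different is unusual.
        return [(i, v) for i, v in enumerate(observed) if v != mu]
    return [
        (i, v)
        for i, v in enumerate(observed)
        if abs(v - mu) / sigma > threshold
    ]


# Hypothetical data: a user who normally logs in 4 to 6 times a day.
baseline_logins = [4, 5, 6, 5, 4, 6, 5, 5, 4, 6]
new_logins = [5, 6, 48, 4]
flagged = find_anomalies(baseline_logins, new_logins)
```

Here only the day with 48 logins is flagged, without the system needing any prior signature for what caused it.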

Utilising behaviour analytics in cybersecurity can be resource intensive, and research indicates that current implementations suit larger enterprises (Figueiredo 2019). Despite this, the technology also shows signs of creating new efficiencies. Hamblen (2016) reports realised efficiencies from the reduced need for IT teams to review lengthy event logs to identify incidents. Behaviour analytics technology enables quick identification of an incident by drilling down to the specific event. This efficiency will be particularly valuable to smaller enterprises, which generally have smaller IT teams with competing priorities.

Another tool that experts are leaning on to tackle modern cybersecurity challenges is hardware authentication. This is not a new technology, but advancements in cloud computing and the associated prevalence of remote work have brought it to the forefront as a valuable tool. The modern security landscape has been extended beyond the traditional siloed networks with known and controlled devices, and so authenticating network users has become of critical importance.

Traditional authentication asks ‘who the user is’ (username) and ‘what they know’ (password) (Incognito Forensic Foundation 2019). Adding a further factor asking ‘what they have’ provides another layer of security that can mitigate the well-understood deficiencies of the username and password (Mell n.d.). Technologies such as fingerprint and facial recognition have brought additional factors to consumer-grade products, while tokens that generate one-time passwords are common across business enterprises. The explosion of the IoT is another factor that has brought hardware authentication to the forefront. Managing machine identities has become a critical security capability for organisations employing connected devices and sensors.
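
As an illustration of how a token can generate one-time passwords, the sketch below follows the publicly specified HOTP (RFC 4226) and TOTP (RFC 6238) algorithms using only the Python standard library. It is a simplified example rather than a production implementation, and it is an assumption that any given token in the sources above uses these particular standards.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Counter-based one-time password (RFC 4226)."""
    counter_bytes = struct.pack(">Q", counter)  # 8-byte big-endian counter
    digest = hmac.new(secret, counter_bytes, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time-step counter."""
    return hotp(secret, unix_time // step, digits)


# Shared secret taken from the RFC 4226 test vectors, not a real credential.
shared_secret = b"12345678901234567890"
first_code = hotp(shared_secret, 0)
```

The token and the server both hold the shared secret, so the server can compute the same code independently and compare it with what the user types in.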

Encryption and tokenisation are means by which cybersecurity enables persistent access for authorised users over open networks and the cloud. Interception of data in transit cannot feasibly be prevented without giving up the major benefits of cloud computing. Encrypting data allows information to be transmitted in a meaningless format, readable only to systems that hold a key (Net Diligence 2013). Tokenisation substitutes the data entirely, transmitting only a token that corresponds to the protected data, which is held only by a trusted central agency (Acharjee 2021). This is particularly useful for electronic commerce payments, allowing a token to be substituted for the payment information so that merchants don’t have access to customers’ credit information.
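
The tokenisation flow can be sketched as follows. This is a minimal illustration under stated assumptions: the `TokenVault` class is a hypothetical in-memory stand-in for the trusted central agency, and a real service would add authentication, persistence and auditing.

```python
import secrets


class TokenVault:
    """Hypothetical stand-in for the trusted agency that holds the real data."""

    def __init__(self):
        self._token_to_value = {}

    def tokenise(self, sensitive_value: str) -> str:
        # The token is random, so it reveals nothing about the original value.
        token = secrets.token_hex(16)
        self._token_to_value[token] = sensitive_value
        return token

    def detokenise(self, token: str) -> str:
        # Only the vault can map a token back to the protected data.
        return self._token_to_value[token]


vault = TokenVault()
card_number = "4111111111111111"  # placeholder test number, not real card data
token = vault.tokenise(card_number)
# A merchant would store and transmit only `token`, never `card_number`.
```

Unlike encryption, there is no key that turns the token back into the card number; the mapping exists only inside the vault.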

Quantum computing, while still nascent, poses a significant challenge for cybersecurity in the near future. One of the main security risks it poses is to cryptography, which, as discussed above, is a fundamental tool in modern computing. A quantum computer employed by adversaries has the potential to defeat today’s encryption technologies (World Economic Forum 2020). While the fundamental notion that a technology can be used both to defend and to attack holds true for quantum computing, the immediate onus is on defenders to forearm themselves now, before the technology is in the hands of attackers. Systems with long lifespans that continue to be rolled out, and that will remain in service beyond the likely introduction of quantum computing, must implement quantum-resistant technologies ahead of the hardware (World Economic Forum 2020).

These technologies will have a huge impact on the cybersecurity industry. Workforce capacity will need to increase rapidly or risk falling a step behind technological advancements. The Australian Government Department of Industry, Science, Energy and Resources predicts 11% annualised growth of the domestic sector. In the immediate term, the sheer size of the security landscape will put ever-increasing pressure on cybersecurity professionals. Technologies such as AI, machine learning, and automation must be continually evolved and employed to prevent security professionals from being overwhelmed by the dynamic and expanding nature of cybersecurity threats. As is common in cybersecurity, these technologies will also be employed by cyber criminals to counter existing defences and to further exploit systems (World Economic Forum 2019). Because of this dynamic, I do not foresee the efficiencies and developments that the technology enables reducing the burden on cybersecurity professionals. It will simply represent another tool that they must employ in order to remain competitive with their adversaries.

For us as students of Information Technology, cybersecurity can only play an increasing role in both our studies and future endeavours in the broader industry. The high-growth industry may present significant opportunities for today’s IT students. Perhaps more than other areas of IT, cybersecurity demands constant education to stay abreast of contemporary issues and developments, so it will be an enduring topic over an IT professional’s career. The evolving cybersecurity industry and the general move to SaaS tools do offer smaller enterprises the opportunity to outsource a significant portion of their cybersecurity needs. Services such as Extended Detection and Response (XDR) systems will take strides in centralising these needs, creating efficiencies for smaller security operations teams (Novinson 2021). These developments will likely mean a broader pool of IT professionals, not just cybersecurity experts, will have a touch point with security concerns, albeit through external providers.

Non-IT professionals, many of whom are now working remotely, will be indirectly affected by the need to constantly evolve security technologies. In the immediate term, services that traditionally used a username and password are introducing multi-factor authentication, with a degree of increased complexity for the general computing user. I believe everyone will need to become more aware of the cybersecurity landscape. As technology itself plays an ever more pervasive role in our lives, cybersecurity will similarly play a larger part. Each user maintaining an awareness of the risks and dangers is an important factor in the integrity of the larger cybersecurity system. Of note, the ever-increasing complexity of these developments and cybersecurity’s impact on society place the elderly or technology-inexperienced at a disadvantage, and place a responsibility on the community at large to protect them, just as with any vulnerable segment of society.

Blockchain and Cryptocurrency

Blockchain is a type of database. A database can be described as “a collection of information that is stored electronically on a computer system” (Euromoney Learning 2021). This can be loosely related to a form of currency that can be stored virtually or online. A blockchain is simply a way of storing and recording information in the form of digital transactions.

If we think about a bank or a financial institution, its transactions are stored within its own system, where they are all monitored and traced. With blockchain technology, the transactions are stored on the blockchain itself, which makes them extremely difficult to manipulate, change or hack.
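
One reason stored transactions are hard to change is that each block records a hash of the block before it. The sketch below is a deliberately simplified illustration of that idea, not the data format of any real blockchain; the block fields, function names and transactions are all hypothetical.

```python
import hashlib
import json


def block_hash(index, transactions, previous_hash):
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "transactions": transactions, "previous_hash": previous_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(batches):
    """Link batches of transactions into a chain of blocks."""
    chain = []
    previous_hash = "0" * 64  # placeholder hash for the first block
    for index, transactions in enumerate(batches):
        current_hash = block_hash(index, transactions, previous_hash)
        chain.append({
            "index": index,
            "transactions": transactions,
            "previous_hash": previous_hash,
            "hash": current_hash,
        })
        previous_hash = current_hash
    return chain


def is_valid(chain):
    """Recompute every hash; any edited block breaks the links after it."""
    previous_hash = "0" * 64
    for block in chain:
        if block["previous_hash"] != previous_hash:
            return False
        expected = block_hash(block["index"], block["transactions"], block["previous_hash"])
        if block["hash"] != expected:
            return False
        previous_hash = block["hash"]
    return True


chain = build_chain([["alice pays bob 5"], ["bob pays carol 2"], ["carol pays dave 1"]])
intact = is_valid(chain)
chain[1]["transactions"][0] = "bob pays mallory 200"  # attempt to rewrite history
tampered_detected = not is_valid(chain)
```

Changing one old transaction alters that block's hash, so the stored hash no longer matches and the tampering is detected when the chain is checked.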

Cryptocurrency, a form of digital currency, is stored on the blockchain. It is secured by cryptography. This makes it almost impossible to counterfeit or double-spend (Frankenfield, 2021). This form of currency makes it difficult for users to become involved with fraud and other forms of financial criminal activity.

Cryptocurrency and blockchain technology are said to have been created by a Japanese man called Satoshi Nakamoto (ICAEW 2021). Although Mr Nakamoto’s identity is yet to be confirmed, the first cryptocurrency and the first public ledger for transactions were created in 2009 (Haar 2021).

Mr Nakamoto created the first public ledger, Bitcoin. Bitcoin is a form of digital currency which operates free of any central control and without the oversight of banks or governments (Sparkes, 2021). Bitcoin is known as the mother of all cryptocurrencies and has more recently been called the ‘new gold’ (Wilkerson 2021). Because it is decentralised, the user, purchaser, or investor can access these cryptocurrency funds from anywhere in the world. All they require is a device with an internet connection.

The cryptocurrency market is expanding at a rate that we have never seen before (Lowy 2021). It is currently being used to pay for everyday purchases such as an order at a McDonald’s drive-thru (McEvoy 2021).

This new way to pay covers everything from groceries to electric cars to apps on the Windows store. You can pay over the internet with cryptocurrencies in exchange for goods and services in more places than you would expect. The most recent, and controversial, development in cryptocurrency payments is VISA joining the club (White 2021).

Bitcoin’s energy consumption is a hot topic. According to the University of Cambridge, “the bitcoin network currently consumes about 80 terawatt-hours of electricity annually, roughly equal to the annual output of 23 coal-fired power plants” (McDonnell 2021).

The individual users and the companies that have utilised blockchains and cryptocurrencies also need to keep in mind the impact on everyday people and the environment. With mining rigs becoming so powerful, the energy consumption is undesirable at a minimum. A related example is the current silicon shortage, which could be linked to the boom in demand for PC parts (Baraniuk 2021). Between the supply and demand issues and the silicon shortage, the price of these parts has risen sharply, in turn affecting anyone who may be transitioning to working from home, for example (Hopkins 2021).

In early 2021, Tesla, led by CEO Elon Musk, disclosed a purchase of approximately 1.5 billion USD worth of Bitcoin (Kovach 2021). This was not just a massive investment; the company was also venturing into accepting Bitcoin as payment (NDTV 2021). Because cryptocurrencies are largely unregulated, assets potentially worth billions of dollars can be wiped out overnight.

It is widely expected that within the next decade cryptocurrencies and blockchains will increase in prevalence and value (Haar 2021). As stated earlier, Bitcoin is said to be the new gold. Banks and financial institutions may eventually be phased out, and manual labour could be replaced entirely by artificial intelligence (AI) and automation (Schmelzer 2021).

Cryptocurrencies are produced in a process called mining. This process produces new units of digital currency, which then enter circulation. These currencies can be mined in two ways: by sophisticated hardware that solves complex mathematical calculations, and by confirming new transactions on the network (Hong 2021).
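
The "complex mathematical calculations" involved in mining are commonly a proof-of-work puzzle: finding a number (a nonce) that gives the block's hash a required pattern. The sketch below is a toy version under simplifying assumptions. It uses a single SHA-256 hash and a "leading zeros" rule with a very low difficulty, whereas Bitcoin itself uses double SHA-256 against a numeric target; the function name and block data are hypothetical.

```python
import hashlib


def mine(block_data: str, difficulty: int = 3):
    """Search for a nonce whose hash starts with `difficulty` zero hex digits."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{block_data}{nonce}".encode("utf-8")).hexdigest()
        if digest.startswith(prefix):
            return nonce, digest
        nonce += 1  # no shortcut exists; the miner simply keeps guessing


nonce, digest = mine("alice pays bob 5", difficulty=3)
```

Finding the nonce takes many guesses, but anyone can verify the result with a single hash, which is why more powerful hardware translates directly into more mining output.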

The two most popular cryptocurrencies are Bitcoin and Ethereum, which have the largest market capitalisations. Market capitalisation refers to the total value of all units of a currency: for example, every single Bitcoin in the world multiplied by the value of each coin. The current market capitalisation of all cryptocurrencies is well over 1 trillion dollars AUD, and Bitcoin alone is also over a trillion dollars AUD (Trading View 2021). This is a very significant amount of money in today’s value.
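
The market capitalisation calculation described above can be written out directly. The figures below are hypothetical round numbers chosen only to show the arithmetic; they are not actual prices or supply figures.

```python
def market_cap(price_per_coin: float, circulating_supply: float) -> float:
    """Market capitalisation = value of each coin x number of coins in circulation."""
    return price_per_coin * circulating_supply


# Hypothetical inputs: 18 million coins in circulation at 50,000 dollars each.
example_cap = market_cap(price_per_coin=50_000.0, circulating_supply=18_000_000.0)
# example_cap is 900 billion dollars under these assumed inputs.
```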

The size of this market pushes companies such as NVIDIA, Intel and AMD to produce more and more products such as GPUs, RAM (memory) and CPUs, which in turn can help speed this ever-changing development in the micro and macro environment as well as boost the production of cryptocurrency.

Considering the massive advancements made in the realm of technology in the last few years, it is safe to assume that there will be even bigger advancements to come. This isn’t to say that banks will be replaced any time soon, but we may see the use of traditional banking continue to decline (Celner 2021). The issue standing in the way of cryptocurrencies becoming the norm is accessibility; it is hard to imagine that cryptocurrencies will find a way to be accessible to those who are older or who lack technological skills. That said, accessibility could be the next big milestone.

The potential impact of the use of blockchains and cryptocurrencies will be significant. It has the potential to influence everyone in the community who has access to a bank account, a smartphone, or the necessary hardware and software (Orcutt 2019).

The potential positive impact of these technologies will be an increase in demand, which in turn could mean the creation of more jobs within this area (Bradford 2017). On the opposite end of the spectrum, the constant pressure to keep up with demand could overwork the IT industry and potentially burn it out.

With the advancements in AI and automation, we can assume that mining for crypto could become automated, leaving only physical aspects such as the hardware to be supplied. It is said that AI and automation will eventually take over. On another note, there always needs to be a balance between AI and human involvement, as there will always be some aspects for which a human is required (Koval 2021).

Source: https://www.toptal.com/finance/market-research-analysts/cryptocurrency-market

Expanding on the previous point, we also need to prepare for the sudden disposability of many roles throughout the technology industries. We have already seen automation taking over in areas such as mass production, but this technology holds the potential to see even simple jobs overtaken by automation. Looking ahead, we need to be dynamic in how we adapt to everything around us; if we aren’t, this could be detrimental not only to society but to our future (O'Brien 2015).

There are many applications that help users keep track of and access their finances, both through banks and through apps or web browsers for cryptocurrencies. Apps such as Coinspot and Binance, for example, allow you to buy, sell and stake a range of cryptocurrencies (Sharma 2021). These trading platforms also allow you to store your coins in an online storage system referred to as a “hot wallet” (Frankenfield 2021). These apps include many features that allow you to analyse data and specific charts for hourly or monthly statistics. These applications have made the management of funds a breeze, but this was not always the case; consider, for example, having to fill out a paper application in person to get a loan or open an account at a bank.

Cryptocurrencies have already made a lasting impression on the general public. They have become a major talking point among friends, family, the media and celebrities, and El Salvador has recently adopted Bitcoin as national legal tender (Bartholomeusz 2021).

Autonomous Vehicles

Autonomous driving is becoming more reality than science fiction of late, especially with some of the latest developments in the field. However, there’s still a long way to go before we start seeing fully autonomous vehicles on our streets. There is currently a race between companies to be the first to develop an automated vehicle safe to let loose on the streets. Notable companies including Waymo, Uber, Toyota, Apple, and Tesla are all working hard on autonomous vehicles.

Waymo runs a service called Waymo One in Phoenix, Arizona, where customers can call a car and set a destination, with stops along the way if needed. This automated service, which works similarly to a taxi, is currently only available in several cities in the Metro Phoenix area. This is due to the need for rigorous scanning of the city’s environment so that the automated vehicle has a clear understanding of where it is at all times, as relying on GPS alone can be limiting in some situations. Waymo has also launched a Trusted Tester program in San Francisco, where it intends to launch Waymo One soon.

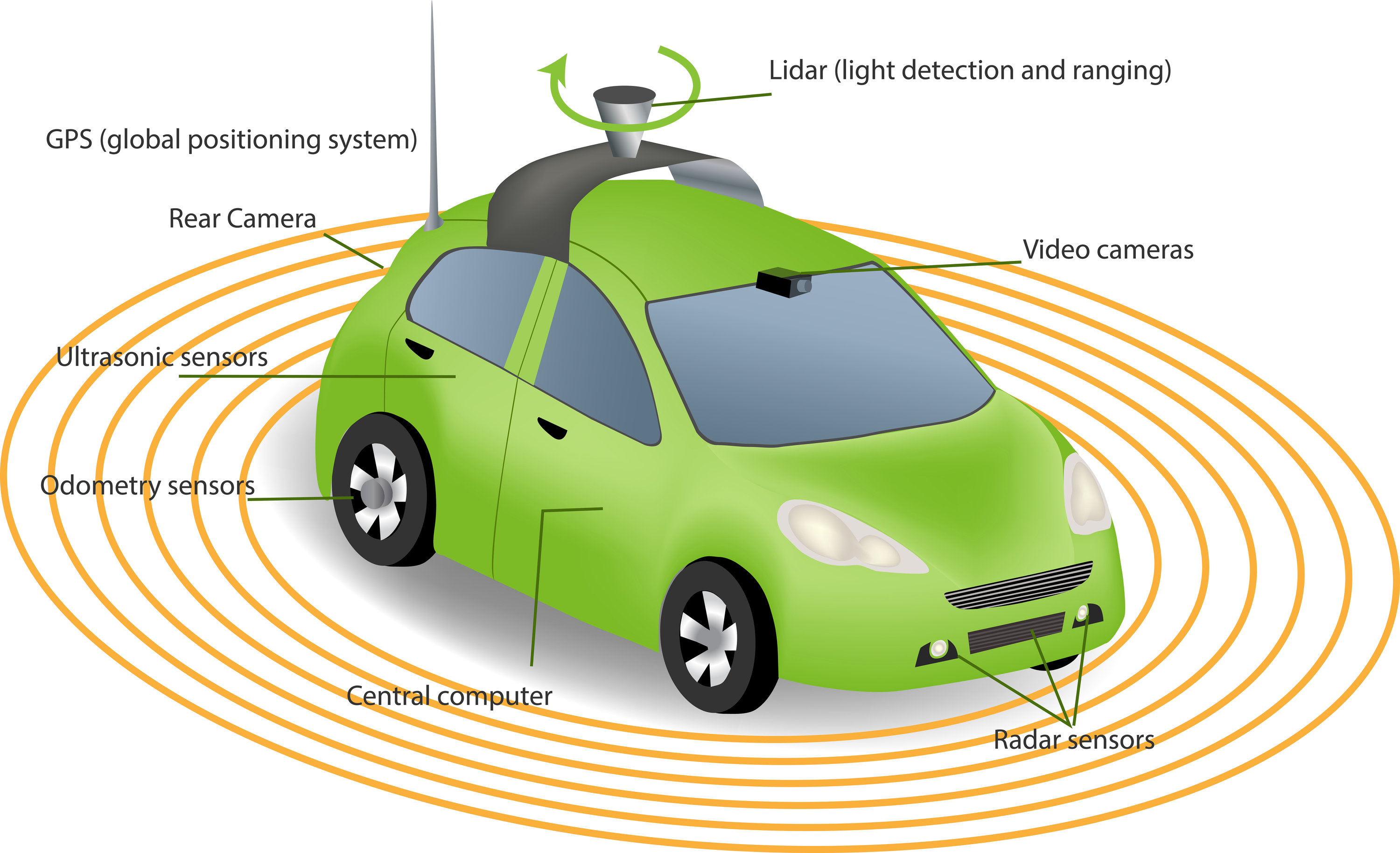

Using a complex mash-up of machine learning coupled with Lidar, cameras and radar, the computer within the vehicle can generate a picture of its surrounding environment. Based on the simulations and other driving the computer has previously done, it can make driving decisions. Following this principle, to get a better autonomous driver it is vital to give the computer as much practice as possible to help prepare it for any situation on the road.

On its website, Waymo currently claims to have done millions of miles on public roads and billions of miles in simulations, which has helped the company get to where it is today. However, the road to autonomous vehicles isn’t all golden. Waymo has hit a speedbump in development, finding the computer struggles with minor disturbances such as cyclists, construction crews and pedestrians, as well as rain or snow (Coppola & Bergen 2021). These are all obstacles that will need to be overcome before there’s any hint of a commercially viable autonomous vehicle rollout.

Tesla is another notable company with its well-known autopilot feature that uses multiple cameras, ultrasonic sensors, and radar to auto steer the car and automatically accelerate and brake. However, it is very limited in functionality and always requires the driver’s full attention by having their hands placed on the steering wheel to enable the feature.

Lidar is one of the most significant recent advancements in the autonomous vehicle sector. It’s essentially the eyes of the vehicle: a constantly rotating sensor sends thousands of laser pulses every second, which are reflected off the surrounding environment and then translated by a computer into a real-time 3D model. The computer can then interpret this information and, from its machine learning experience, judge how to react to the environment, whether that’s to stop, turn or accelerate (Choudhary 2020).
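
To illustrate how reflected pulses can become a 3D model, the sketch below assumes a time-of-flight sensor: the distance to an object is half the pulse's round-trip time multiplied by the speed of light, and the beam's angles place that distance in 3D space. The function names and the sample returns are hypothetical, and real units apply calibration and filtering that are omitted here.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def range_from_time_of_flight(round_trip_seconds: float) -> float:
    """Distance to the reflecting surface: the pulse travels there and back."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


def to_cartesian(distance_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one return (range plus beam angles) into an (x, y, z) point."""
    azimuth = math.radians(azimuth_deg)
    elevation = math.radians(elevation_deg)
    horizontal = distance_m * math.cos(elevation)
    x = horizontal * math.cos(azimuth)
    y = horizontal * math.sin(azimuth)
    z = distance_m * math.sin(elevation)
    return (x, y, z)


def build_point_cloud(returns):
    """Turn (round_trip_seconds, azimuth_deg, elevation_deg) tuples into 3D points."""
    return [
        to_cartesian(range_from_time_of_flight(t), az, el)
        for t, az, el in returns
    ]


# A pulse that returns after 200 nanoseconds hit something roughly 30 m away.
distance = range_from_time_of_flight(200e-9)
cloud = build_point_cloud([(200e-9, 0.0, 0.0), (100e-9, 90.0, 0.0)])
```

Repeating this for thousands of pulses per second, as the sensor rotates, is what produces the continuously updated point cloud the driving computer works from.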

For the adoption of autonomous vehicles to work, some key things need to happen. First off, more practice. Machine learning requires large amounts of data to help the computer get better and better. The computer’s ability to drive would need to be exceptional to trump an individual just hopping in the car and driving themselves.

The cost is a significant factor too. We’ve already seen Lidar drop from costing around $75,000 in 2015 to $100 in 2021 for a unit the size of a soft drink can (Dans 2021). Safety is a crucial factor in the successful rollout of autonomous vehicles, so only time will tell, as teams keep training computers to become better and better drivers and as technologies become cheaper and more accessible for use.

Diagram of how LIDAR works Source: https://innovationatwork.ieee.org/lidr-is-the-latest-game-changing-advancement-for-autonomous-vehicles/

According to the World Health Organization’s report on road traffic injuries, approximately 1.3 million people die each year because of road traffic crashes (World Health Organization, 2021). These are often related to using mobile phones whilst driving, speeding, not using safety equipment (e.g., seatbelts or helmets) and driving under the influence of alcohol and/or illicit substances.

The hope with the development of Autonomous Vehicles is that it will remove or drastically lower the risk of human error, leading to fewer injuries and fatalities occurring on roads across the world. Another benefit of Autonomous Vehicles being introduced is for the elderly and those who have a disability preventing them from driving. This would give those with a disability much more freedom to continue travelling around to do the everyday things they love to do! This, however, would only be possible if Level 5 of Vehicle Autonomy was reached. Vehicles that are Level-5 capable are fully autonomous, with no driver required behind the wheel (APTIV, 2021).

Concerning jobs and the rise of autonomous vehicles, whilst some may become redundant, new jobs may also appear as a result. Jobs in the truck driving and taxi sectors in particular will most likely become obsolete, as autonomous vehicles will be able to drive themselves. However, this does require Level 5 automation to be implemented, which is still a long way off.

Another factor to consider is that, due to their complex nature, these high-tech vehicles will require specialists with specialised training to maintain and repair them. New jobs could also arrive, such as teleoperation, which involves controlling vehicles from a control room. This is likely to appear sooner rather than later, as it doesn’t require a fully automated, Level 5 vehicle. The real impacts of automated vehicles will continue to be observed as further developments in the field are made.

I don’t think autonomous driving would have a massive impact on me until it was widely accepted worldwide and reached Level 5 vehicle automation. However, if we did get to that point, in terms of daily life, the workday commute would be much more peaceful, not having to deal with frustrating drivers and sketchy moments. I would sit back and relax, letting the computer do the driving, freeing up some time to ease myself into the workday. I think if this were the case, it would be a lot better for everyone’s mental health, especially for those who find driving stressful and scary.

Another significant benefit would be parking. Parking can be one of the most tedious things to do and sometimes finding a car park is harder. I’d imagine the autonomous vehicle, connected to other vehicles, would know where to find a free parking spot, making the experience so much quicker and less stressful for me, the passenger. However, something I would miss, and I know many others would, is driving itself. As stressful as driving can be, it is still enjoyable, especially on the more open, country roads.

Autonomous cars would be helpful for people like my grandparents! One of the main reasons Nanna stopped driving is that she finds it too stressful on the roads. With more and more people being out on the roads, and after some bad experiences, she decided to give it up. Her family still picks her up and takes her to the places she needs to go, such as a doctor's appointment, but if autonomous vehicles were widely used she would be so much more mobile, at the push of a button!

The actual effects, good or bad, won't truly be known until autonomous vehicles are released on the streets. Until then, I am more than happy to drive myself!

Machine Learning

Machine learning is a branch of Artificial Intelligence that imitates the way humans learn, using techniques such as neural networks and experimentation to gradually improve its accuracy over generations of data (IBM Cloud Education, 2020). This process takes a lot of time, as the program begins in an infant state with every new subject it is learning. First the program must be given data, known as ‘training data’, to identify parameters on which to operate and make decisions; it will then build on that data with each generation and learn whether it is doing the task correctly. The program will go through many generations before it starts to yield positive results and, depending on the processing speed of the computer and the complexity of the task at hand, this process can take thousands of attempts to solve even the most basic of concepts.

This technology has deep roots going back to 1952, when Arthur Samuel developed a program to learn the game of checkers using an alpha-beta pruning method. This would measure the chances of each side winning before making a play based on the minimax strategy, which would go on to become the minimax algorithm. At this time computers were much slower, and it wasn’t until 1962 that the computer program beat Robert Nealey, a self-proclaimed master, in a historic match (IBM Cloud Education, 2020).

In 1962, Robert Nealey versus an IBM 7094 computer in the game of checkers

Source: https://www.ibm.com/ibm/history/ibm100/us/en/icons/ibm700series/impacts/
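
The minimax strategy with alpha-beta pruning mentioned above can be sketched on a tiny hand-made game tree. This is a generic textbook version for illustration, not a reconstruction of Samuel's checkers program; the tree, its scores and the function name are hypothetical.

```python
import math


def alphabeta(node, maximizing, alpha=-math.inf, beta=math.inf):
    """Minimax value of a game tree, skipping branches that cannot matter.

    A node is either a number (the score of a finished line of play)
    or a list of child nodes (the positions reachable in one move).
    """
    if not isinstance(node, list):
        return node
    if maximizing:
        best = -math.inf
        for child in node:
            best = max(best, alphabeta(child, False, alpha, beta))
            alpha = max(alpha, best)
            if alpha >= beta:
                break  # the opponent would never allow this branch
        return best
    best = math.inf
    for child in node:
        best = min(best, alphabeta(child, True, alpha, beta))
        beta = min(beta, best)
        if alpha >= beta:
            break  # we already have a better option elsewhere
    return best


# Three possible moves for us, each followed by two possible replies.
tree = [[3, 5], [2, 9], [0, 7]]
best_score = alphabeta(tree, maximizing=True)
```

The first move guarantees a score of at least 3, so once the second and third moves are shown to allow a reply worth 2 or 0, their remaining replies (9 and 7) are never examined.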

These days machine learning has an impact on many aspects of our lives, from the traffic routes suggested by Google Maps to shopping online with websites like Amazon. Some of the front runners for this technology, however, reside in the field of medicine. Machine learning algorithms can sort through data from clinical trials and patient history, compare that data to the latest research in the field, and tailor a treatment protocol that best suits the patient's needs, saving the patient money and unnecessary tests (Project Pro, 2021).

Pfizer has been using machine learning for years in its immuno-oncology research to sift through data and assist drug discovery, especially when combining multiple drugs and deciding on the best participants for clinical trials. Machine learning has also been effectively utilised in the banking sector, where algorithms sift through thousands of transactions per customer to detect unusual activity and put a hold on fraudulent transactions. Banks also detect patterns of purchases and links to organised crime using this same technology. Using this technology has already saved customers thousands of dollars and is also helping to protect the general public by preventing criminals from getting their hands on illegal weaponry (Gavrilova 2020).

Moving forward into the not-so-distant future, we can already see the likely impact of machine learning on our everyday lives. Soon we will have robots or drones powered by machine learning taking full control of bomb disposal, which currently still requires human input to operate. Utilising these drones has already saved thousands of lives and will continue to save thousands more (Elite Data Science, n.d).

According to Weissgraeber (2021), a combination of Artificial Intelligence and machine learning will give rise to hyper-personalisation and a greater customer experience within the e-commerce sector. Websites will create an algorithmic e-commerce experience where customers receive customised shopping experiences akin to a personalised sales pitch. This shift in the market will deliver more value to each customer based on their needs at any given moment.

Machine learning will likely impact the world drastically in the near future. As the technology improves, policies, regulations and laws will all need to be updated to accommodate the shift. In the e-commerce sector, privacy will be one of the biggest concerns. Utilising customers’ data as companies already do raises ethical questions, and with additional machine learning technology sifting through even more data, it will become a necessity for this information to be even better protected. This will lead to more roles in cybersecurity, better data server infrastructure and better privacy laws.

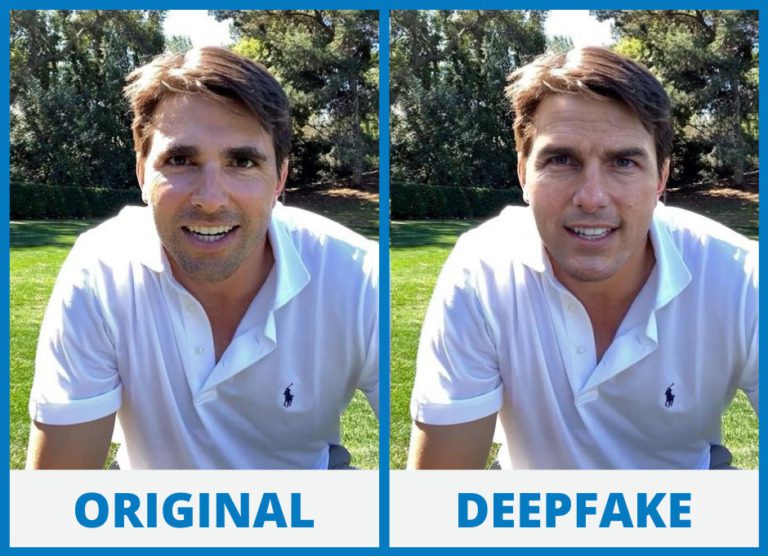

One major concern that doesn’t seem pressing yet, but still warrants discussion, is the possibility of Technological Singularity, also referred to as superintelligence, where an AI surpasses human intelligence and can be comparatively competent in creativity, wisdom, and social skills (IBM Cloud Education, 2020). The technology for deepfakes is getting close to this point from a media perspective; already it is difficult to tell the difference between an original and a fake unless you know what to look for. Moving into the future this will only get harder, and press conferences and mainstream news outlets may become the only reliable sources for current events for fear of an impersonator.

Source: https://cybersafetycop.com/deepfakes/

According to Deshmukh (2021), the 12 jobs most likely to be replaced by AI and machine learning are customer service executives, bookkeeping and data entry, receptionists, proofreading, manufacturing and pharmaceutical work, retail services, courier services, doctors, soldiers, taxi and bus drivers, market research analysts and security guards. Rather than taking these roles away completely, this should result in a shift in the market, with most of these roles being supplemented by machine learning technology.

Machine learning and Artificial Intelligence are set to change the world as we know it and already have subtle impacts on our daily lives, and I can see these impacts becoming more pronounced as the technology improves. I can already picture a future where your home computer knows what website you would like to see before you load it, based on the discussions in and around the home. Virtual assistants will become a necessity to plan your daily schedule and to keep track of any appointments. They will also be incredibly useful with our ever-growing diversity, using text-to-speech or speech-to-speech technology to allow people from all walks of life to communicate with each other regardless of language barriers.

The biggest change we would need to get used to, or at least find ways of handling effectively, would be a major impact on our privacy. With Machine Learning having immense amounts of data at hand and being able to learn and grow, potentially compiling more data and creating mega-servers, it will only be a matter of time until nothing is secret. The only other option would be for individuals to be much more careful of the information they choose to make public.

Everyone will be impacted by this; every company will be using machine learning algorithms to improve efficiency and to target a bigger audience. I currently work at a restaurant, and I can imagine a machine learning program that would help sort through a customer database to better advertise an upcoming special based on each customer’s prior dining experiences.

Whilst machine learning isn’t by any means a new technology, the recent jumps in processing power now make it an increasingly useful one. The future is looking more and more robotic, that’s for sure.